IBM DataPower Operations Dashboard v1.0.8.5

A newer version of this product documentation is available.

You are viewing an older version. View latest at https://ibm.biz/dpod-docs.

High Availability, Resiliency or Disaster Recovery

High Availability (HA), Resiliency or Disaster Recovery (DR) Implementation

There are multiple ways to plan and configure DPOD for HA/DR. The appropriate method depends on the customer's requirements, implementation and infrastructure.

Terminology

Node State/mode - A DPOD node can be in one of the following states: Active (On, performing monitoring activities), Inactive (Off, not performing any monitoring activities), DR Standby (On, not performing monitoring activities).

Primary Node - A DPOD installation that actively monitors DataPower instances under normal circumstances (Active state).

Secondary Node - A DPOD installation that is identical to the Primary Node (in a shared storage scenario it is the same image as the primary node) and is in DR Standby or Inactive state.

3rd party DR software - A software tool that helps identify when the primary node state has changed from active to inactive, and initiates the process of launching the secondary node as active.
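The three node states can be summarized in a small model. The following is only an illustrative sketch of the terminology above; the class and function names are hypothetical and are not part of DPOD.

```python
from enum import Enum


class NodeState(Enum):
    """DPOD node states; value = (node is on, node performs monitoring)."""
    ACTIVE = (True, True)
    INACTIVE = (False, False)
    DR_STANDBY = (True, False)

    @property
    def is_on(self):
        return self.value[0]

    @property
    def is_monitoring(self):
        return self.value[1]


def valid_secondary_state(state):
    """A secondary node is expected to be in DR Standby or Inactive state."""
    return state in (NodeState.DR_STANDBY, NodeState.INACTIVE)


for state in NodeState:
    print(state.name, "on:", state.is_on, "monitoring:", state.is_monitoring)
```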

DPOD Scalability vs. HA/DR

The DPOD architecture supports installation of multiple DPOD nodes for scalability - to support high throughput in cases of high rate of transactions per second (TPS). However, this does not provide a solution for HA/DR requirements.

For simplicity, this document assumes that only one DPOD node is installed, but the same scenarios and considerations apply to multi-node installations.

Important HA/DR Considerations

Consult your BCP/DR/System/Network Admin and address the following questions before selecting which method(s) of HA/DR implementation with DPOD to use:

1. For large installations, DPOD can capture vast volumes of data. Replicating that much data for DR purposes may consume significant network bandwidth, and may incur 3rd party storage replication license costs.

Is it cost effective to replicate DPOD's data, or is it acceptable to launch another instance of DPOD with configuration replication only?

2. The software used for Active/Passive scenario:

Will you run DPOD on a virtual infrastructure such as VMware, or can you use VMware vMotion or Active/Passive cluster management tools that can help identify a failure and relaunch DPOD on a different cluster member?

3. You are expected to have Active/Passive software or another mechanism in place to identify when a DPOD node becomes inactive, and to launch a new one on an active cluster member.

Do you have such a tool (DR software)?

4. When launching a new DPOD instance on the backup cluster member:

Will the new instance keep the same network configuration of the primary instance (for example: IP Address, DNS, NTP, LDAP, SMTP) or will the configuration change?

5. Some DataPower architecture solutions (Active/Passive or Active/Active) affect DPOD configuration. If the DataPower IP address changes, your DPOD configuration may need to change.

Does your DataPower architecture use an active/passive deployment? If so - will the passive DataPower have the same IP address when it switches to active?

Common Scenarios for DPOD HA/DR Implementation

Scenario A: Active/Passive - DPOD's IP Address remains the same - Shared Storage

Assumptions:

- The customer has DataPower appliances deployed using either an Active/Passive, Active/Standby or Active/Active configuration. All DataPower appliances in any of these configurations have unique IP addresses.

- The customer has storage replication capabilities to replicate DPOD disks based on the disks’ replication policy described above.

- A primary DPOD node is installed, and is configured to monitor all DataPower appliances (active, standby and passive). The secondary node will use the same disks on shared storage.

- All DPOD network services (NTP, SMTP, LDAP etc.) retain the same IP addresses in a failover event (or else a post configuration script is required to be run by the DR software).

- The customer has a 3rd party software tool or scripts that can:

- Identify unavailability of the primary DPOD node.

- Launch a secondary DPOD node using the same IP address as the primary one (usually on different physical hardware).

- The secondary DPOD node is not operating when business is as usual, because disk replication is required and the secondary node has the same IP address as the primary DPOD node.

- This scenario might not be suitable for high load implementations, as replication of DPOD data disk might not be acceptable.

During a disaster:

- The customer's DR software should identify a failure in the DPOD primary node (e.g. by pinging an access IP, sampling the user interface URL or both).

- The customer's DR software should launch the secondary DPOD node using the same IP address as the failed primary node (or initiate changing the IP address if not already configured that way).
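The failure detection step can be sketched as follows. This is a minimal illustration only, not part of DPOD or of any particular DR product. A TCP connection attempt stands in for the ping, the "all probes must fail for several consecutive rounds" policy is an assumption, and the host name and URL in the usage comment are hypothetical.

```python
import http.client
import socket
import time
import urllib.error
import urllib.request


def tcp_reachable(host, port, timeout=3.0):
    """Stand-in for a ping: True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def url_responds(url, timeout=5.0):
    """True if an HTTP(S) GET of the URL completes with a 2xx or 3xx status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 400
    except (urllib.error.URLError, http.client.HTTPException, OSError, ValueError):
        return False


def primary_is_down(probes, required_failures=3, delay_seconds=10.0):
    """Report the node as down only if every probe fails in
    `required_failures` consecutive rounds (assumed policy)."""
    for attempt in range(required_failures):
        if any(probe() for probe in probes):
            return False  # at least one probe succeeded: node is reachable
        if attempt < required_failures - 1:
            time.sleep(delay_seconds)
    return True


# Hypothetical usage (host name and URL are placeholders):
# probes = [lambda: tcp_reachable("dpod.example.com", 443),
#           lambda: url_responds("https://dpod.example.com/")]
# if primary_is_down(probes):
#     ...  # hand over to the DR software's launch procedure
```

Requiring several consecutive failed rounds reduces the chance that a short network glitch triggers a failover; the right threshold and delay depend on the customer's DR policy.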

DPOD will be available in the following way:

- As the secondary DPOD node has the same IP address, all DataPower appliances will be able to access it.

- Since all DataPower appliances retain the same IP addresses, DPOD can continue to sample them.

- Since the secondary DPOD node has the same IP address as the primary one, access to DPOD's console retains the same URL.

Scenario B: Active/Passive – DPOD's IP Address changes - Shared Storage

Assumptions:

- The customer has DataPower appliances deployed using either an Active/Passive or Active/Standby configuration. All DataPower appliances in any of these configurations have unique IP addresses.

- The customer has storage replication capabilities to replicate DPOD disks based on the disks’ replication policy described above.

- A primary DPOD node is installed, and is configured to monitor all DataPower appliances (active, standby and passive). The secondary node will use the same disks on shared storage.

- All DPOD network services (NTP, SMTP, LDAP etc.) retain the same IP addresses in a failover event (or else a post configuration script is required to be run by the DR software).

- The customer has a 3rd party software tool or scripts that can:

- Identify unavailability of the primary DPOD node.

- Launch a secondary DPOD node using a different IP address from the primary one (usually on different physical hardware).

- The secondary DPOD node is not operating when business is as usual, since disk replication is required.

- This scenario might not be suitable for high load implementations, as replication of DPOD data disk might not be acceptable.

During a disaster:

1. The customer's DR software should identify a failure in DPOD's primary node (e.g. by pinging an access IP, sampling the user interface URL or both).

2. The customer's DR software should launch the secondary DPOD node using a different IP address from the failed primary node (or initiate changing the IP address if not already configured that way).

3. The customer's DR software should execute a command/script to change DPOD's IP address.

4. The customer's DR software should change the DNS name for the DPOD node's web console to reference an actual IP address or use an NLB in front of both DPOD web consoles.

5. The customer's DR software should disable all DPOD log targets, update DPOD host aliases and re-enable all log targets in all DataPower devices. This is done by invoking a REST API call to DPOD (see "refreshAgents" API under Devices REST API).
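Because the order of the disaster steps above matters (the log targets can only be refreshed once the node is running with its new IP address), a DR script would typically run them in sequence and stop at the first failure. The following is a hedged sketch of such a sequence runner. The step labels paraphrase the list above, and the action callables are hypothetical placeholders for whatever the customer's DR software actually does; no DPOD API is called here.

```python
# Step labels paraphrasing the Scenario B "During a disaster" list.
SCENARIO_B_STEPS = [
    "identify failure of the primary node",
    "launch the secondary node with a different IP address",
    "change DPOD's IP address",
    "update DNS name or NLB for the web console",
    "refresh agents (log targets and host aliases)",
]


def run_steps(steps):
    """Run (name, action) pairs in order.

    Each action is a zero-argument callable returning True on success.
    Returns (completed_names, failed_name); failed_name is None when
    every step succeeded. Steps after a failure are not attempted.
    """
    completed = []
    for name, action in steps:
        if not action():
            return completed, name
        completed.append(name)
    return completed, None


# Placeholder actions that always succeed; real actions are site-specific.
steps = [(name, lambda: True) for name in SCENARIO_B_STEPS]
completed, failed = run_steps(steps)
print("completed:", completed)
print("failed:", failed)
```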

DPOD will be available in the following way:

- Although the secondary DPOD node has a different IP address, all the DataPower appliances will still be able to access it since their internal host aliases pointing to DPOD will be replaced (step 5 above).

- As all DataPower appliances retain the same IP addresses - the secondary DPOD node that was just made active can continue to sample them.

- Although the secondary DPOD node has a different IP address, all users can access DPOD’s web console because its DNS name has been changed or it is behind an NLB (step 4 above).

Scenario C: Active/Standby – Two separate DPOD installations with no shared storage

Assumptions:

- The customer has DataPower appliances deployed using either an Active/Passive or Active/Standby configuration. All DataPower appliances in any of these configurations have unique IP addresses.

- Two DPOD nodes are installed (requires DPOD version 1.0.5+). One operates as the Active node and the other as Standby. After installing the secondary DPOD node, it must be configured to run in Standby state. See "makeStandby" API under DR REST API.

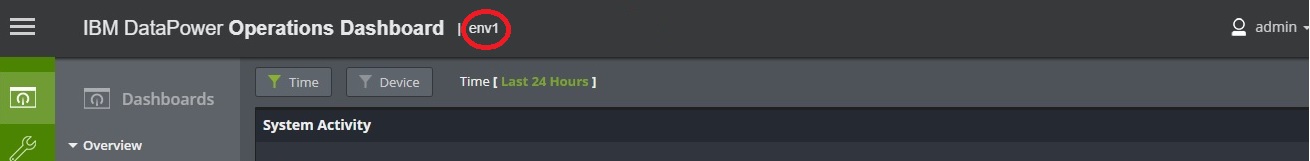

- Both DPOD nodes should have the same environment name. The environment name is set by the customer during DPOD software deployment or during upgrade, and is visible in the top navigation bar (circled in red in the image below):

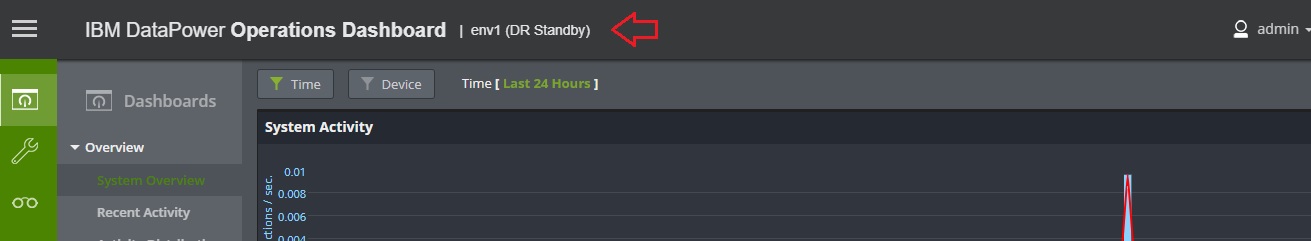

- When the DPOD node is in a DR Standby mode, a label is displayed next to the environment name in the Web Console. A refresh (F5) may be required to reflect recent changes if the makeStandby API has just been executed, or when the DPOD status has changed from active to standby or vice versa. See the image below:

- As both nodes are up, no configuration or data replication can exist in this scenario. The customer is expected to configure each DPOD node as a standalone deployment, including all system parameters, security groups / roles / LDAP parameters / certificates, custom reports and reports scheduling, custom alerts and alerts scheduling, maintenance plan and user preferences. DPOD does not perform any configuration synchronization.

- Importantly, the customer must add DataPower instances to each installation in order to monitor all DataPower devices (active, standby and passive). Starting with DPOD v1.0.5, a REST API can be used to add a new DataPower device to DPOD without using the UI (see Devices REST API). The customer must add DataPower instances to the standby DPOD node and set the agents for each device from the Device Management page in the web console (or by using the Devices REST API). Setting up the devices in the standby DPOD node does not make any changes to the monitored DataPower devices (no log targets, host aliases or configuration changes are made).

- All DPOD network services (NTP, SMTP, LDAP etc.) have the same IP addresses.

- The customer has a 3rd party software tool or scripts that can:

- Identify unavailability of the primary DPOD node.

- Change the state of the secondary node (that is in standby state) to Active state.

- The standby DPOD node can still be online as disk replication is not required.

- This scenario does not provide High Availability for data. To load data from the primary node, the customer must restore backups taken from the primary node.

- During state transition of the Secondary DPOD from Active back to Standby there might be some data loss.

During a disaster:

1. The customer's DR software should identify a failure in the DPOD primary node (e.g. by pinging an access IP, sampling the user interface URL or both).

2. The customer's DR software should enable the standby DPOD node by calling the "standbyToActive" API (see DR REST API). This API will point DPOD's log targets and host aliases of the monitored devices to the standby node and enable most timer based services (e.g. Reports, Alerts) on the secondary node.

3. The customer's DR software should change the DNS name for the DPOD node's web console to reference an actual IP address or use an NLB in front of both DPOD web consoles.

DPOD will be available in the following way:

- Although the secondary DPOD node has a different IP address, all the DataPower appliances will still be able to access it since their internal host aliases pointing to DPOD will be replaced (step 2 above).

- As all DataPower appliances retain the same IP addresses - DPOD can continue to sample them.

- Although the secondary DPOD node has a different IP address, all users can access DPOD’s web console because its DNS name has been changed or it is behind an NLB (step 3 above).

- Important! - None of the data from the originally Active DPOD node will be available.

In a "Return to Normal" scenario:

- Immediately after relaunching the primary node, call the "standbyToInactive" API (see DR REST API) to disable the standby node.

- Call the "activeBackToActive" API (see DR REST API) to re-enable the primary node. This will point DPOD's log targets and host aliases on the monitored devices back to the primary DPOD node.

- The customer's DR software should change the DNS name for the DPOD node's web console to reference an actual IP address or use an NLB in front of both DPOD web consoles.

- During state transition of the Primary node from Active to Standby there might be some data loss.
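The order of DR REST API calls in Scenario C, for both the disaster and the "Return to Normal" flows, can be captured as data so that a DR script always issues them in the documented sequence. This is a sketch under assumptions: only the API names come from the text above, the choice of which node each call targets is our reading of that text, and `call_api` is a hypothetical function that the customer would implement according to the DR REST API documentation.

```python
# Ordered (target node, DR REST API name) pairs per event.
# The target node for each call is an assumption based on the text.
SCENARIO_C_CALLS = {
    "disaster": [
        ("secondary", "standbyToActive"),
    ],
    "return_to_normal": [
        ("secondary", "standbyToInactive"),
        ("primary", "activeBackToActive"),
    ],
}


def run_event(event, call_api):
    """Issue the calls for `event` in order using the supplied
    call_api(node, api_name) callable (hypothetical; expected to raise
    on failure). Returns the list of calls that were issued."""
    issued = []
    for node, api_name in SCENARIO_C_CALLS[event]:
        call_api(node, api_name)
        issued.append((node, api_name))
    return issued


# Dry run that only prints what would be called.
def print_call(node, api_name):
    print("would call", api_name, "on the", node, "node")


run_event("disaster", print_call)
run_event("return_to_normal", print_call)
```

The DNS or NLB change for the web console is outside DPOD and is therefore not modeled here.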

Scenario D: Limited Active/Active – Two separate DPOD installations with no shared storage

Assumptions:

- The customer has DataPower appliances deployed using either an Active/Passive, Active/Active or Active/Standby configuration. All DataPower appliances in any of these configurations have unique IP addresses.

- Two DPOD nodes are installed (both are v1.0.5+), both running in Active state.

- The two DPOD nodes must have different environment names. The environment name is set by the customer during DPOD software deployment, and is visible in the top navigation bar.

- Both DPOD nodes are configured separately to monitor all DataPower devices (active, standby and passive). Starting with DPOD v1.0.5, a REST API can be used to add a new DataPower device to DPOD without using the UI (see Devices REST API). As both nodes are up, no configuration replication can exist in this scenario.

- As both nodes are up, no data replication can exist in this scenario. The customer is expected to configure each DPOD node as a standalone deployment, including all system parameters, security groups / roles / LDAP parameters / certificates, custom reports and reports scheduling, custom alerts and alerts scheduling, maintenance plan and user preferences. DPOD does not perform any configuration synchronization.

- Importantly, the customer must add DataPower instances to each installation to monitor all DataPower Devices (active, standby and passive). Starting with DPOD v1.0.5, a new REST API may be utilized to add a new DataPower device to DPOD without using the UI (see Devices REST API). The customer must add DataPower instances to the standby DPOD node and set the agents for each device from the Device Management page in the web console (or by using the Devices REST API). Setting up the devices in the standby DPOD node will not make any changes to the monitored DataPower devices (no log targets, host aliases or configuration changes will be made).

- All DPOD network services (NTP, SMTP, LDAP etc.) have the same IP addresses.

- The customer added DataPower devices to the standby DPOD node and set the agents for each device from the Device Management page in the web console (or by using the Devices REST API). The customer is expected to replicate all configurations and definitions for each installation. DPOD replicates neither data nor configurations/definitions.

- Important! - Since the two installations are completely independent and no data is replicated, data inconsistency may follow, as one node may capture information while the other is down for maintenance, or the two may have been started at different times. This might affect reports and alerts.

- Important! - Each DPOD installation creates 2 log targets for each domain. If one DataPower device is connected to 2 DPOD installations, each domain needs 4 log targets. As DataPower has a limit of ~1000 log targets starting with firmware 7.6, the customer must take care not to reach the log target limit.

- All logs and information are sent twice over the network, so network bandwidth consumption is doubled.
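The log target arithmetic above can be checked with a short calculation. This is a rough sketch: the figures of 2 log targets per domain per DPOD installation and a limit of about 1000 come from the text, the limit is assumed to apply per DataPower device, and the domain counts and the number of non-DPOD log targets are hypothetical inputs.

```python
LOG_TARGETS_PER_DOMAIN_PER_DPOD = 2  # from the text above
APPROX_LOG_TARGET_LIMIT = 1000       # "~1000" from the text; assumed per device


def dpod_log_targets(domains, dpod_installations):
    """Log targets DPOD creates on one DataPower device."""
    return domains * dpod_installations * LOG_TARGETS_PER_DOMAIN_PER_DPOD


def remaining_log_targets(domains, dpod_installations, other_log_targets=0):
    """Headroom left under the approximate limit; negative means over it."""
    used = other_log_targets + dpod_log_targets(domains, dpod_installations)
    return APPROX_LOG_TARGET_LIMIT - used


# One domain monitored by two DPOD installations needs 4 log targets.
print(dpod_log_targets(domains=1, dpod_installations=2))

# Hypothetical device: 50 domains, 2 DPOD installations, 100 other log targets.
print(remaining_log_targets(domains=50, dpod_installations=2, other_log_targets=100))
```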

During a disaster:

- No action is required. The DataPower instance will push data to both instances.

DPOD will be available in the following way:

- The active node will continue to operate as it was operating before.

- All users can access DPOD’s web console as they could before the disaster, because its DNS name has been changed or it is behind an NLB.

- Note - Some data from the originally Active DPOD node will not be available.

In a "Return to Normal" scenario:

- No action is required. The DataPower instance will push data to both instances.

- The data gathered throughout the disaster period cannot be synced back to the recovered node.

Backups

To improve product recovery, an administrator should perform regular backups as described in the backup section.